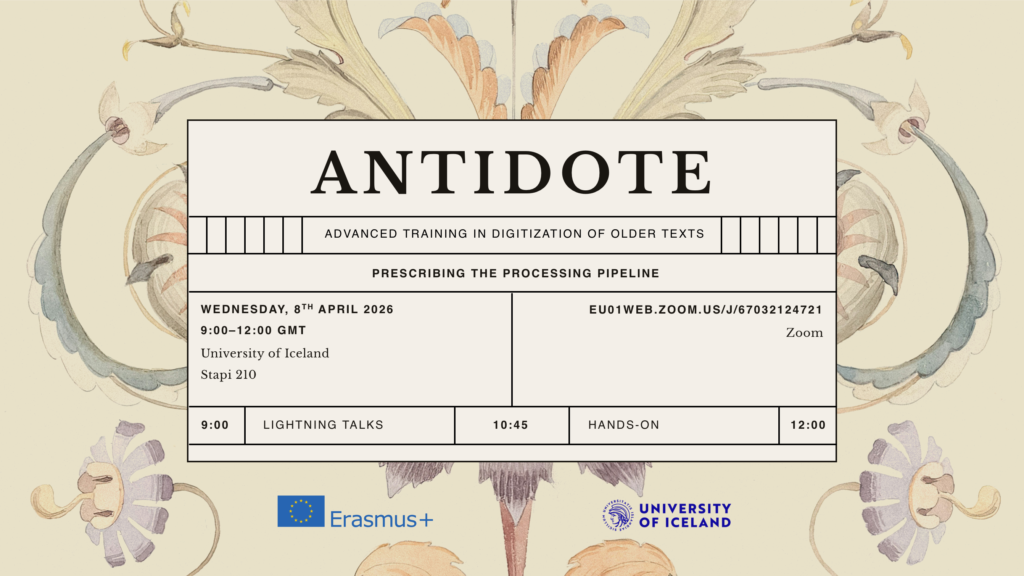

The Erasmus+ funded network ANTIDOTE (Advanced Training in Digitization of Older Texts) is pleased to invite students, scholars, archivists, librarians, and practitioners to its upcoming multiplier event, Prescribing the Processing Pipeline, to be held in Stapi 210 on Wednesday, 8 April 2026, 09:00–12:00.

This event focuses on the digitization pipeline from the physical manuscript to digital editions, visualization, and annotation. Its purpose is to foster collaboration between local institutions (the University of Iceland, the National Archives of Iceland, the National and University Library of Iceland, and the Árni Magnússon Institute for Icelandic Studies) and the experts within the ANTIDOTE network.

Local institutions are invited to present their current digitization challenges while international experts will showcase the latest tools, methods, and solutions to overcome them.

The event is divided into two parts:

- Lightning Talks: A fast-paced series of presentations covering the phases of the digitization pipeline.

- Hands-on Session: A practical session where participants can gain experience with digital humanities tools, including Transkribus (HTR), Manu (IIIF), and TEI Publisher.

The schedule is attached below.

Note on Attendance: The hands-on session will be offered only to on-site participants. Due to limited room capacity, in-person attendance requires prior registration. Participation is free.

ANTIDOTE – Advanced Training in Digitization of Older Texts

Multiplier event: Prescribing the Processing Pipeline

Digitization • Text Recognition • TEI Encoding • Publication • Visualization • Annotation

Wednesday, 8 April 2026, 09:00–12:00 GMT

University of Iceland, Stapi 210 and on Zoom

Welcome

09:00 Eiríkur Smári Sigurðarson, University of Iceland

Advanced Training in Digitization of Older Texts

Lightning Talks

09:10 Unnar Rafn Ingvarsson, National Archives of Iceland

Catalogues, collections and digitization 1

09:20 Halldóra Kristinsdóttir, National and University Library

Catalogues, collections and digitization 2

09:30 Katrín Lísa L. Mikaelsdóttir, University of Iceland

Handwritten Text Recognition

09:40 Hinrik Hafsteinsson, Árnastofnun

Optical Character Recognition

Coffee break

10:05 Haraldur Bernharðsson, University of Iceland

XML Encoding

10:15 Martin Roček, Charles University & IMAFO, ÖAW

Manu: Collaborative Annotation of IIIF Manuscripts

10:25 Ondřej Tichý, Charles University

TEI Publisher

10:35 Beeke Stegmann, Árnastofnun

Menota – Medieval Nordic Text Archive

Hands-on

10:45–12:00

Katrín Lísa L. Mikaelsdóttir, Martin Roček and Ondřej Tichý

Transkribus (HTR), Manu (IIIF) and TEI Publisher (TEI-XML)